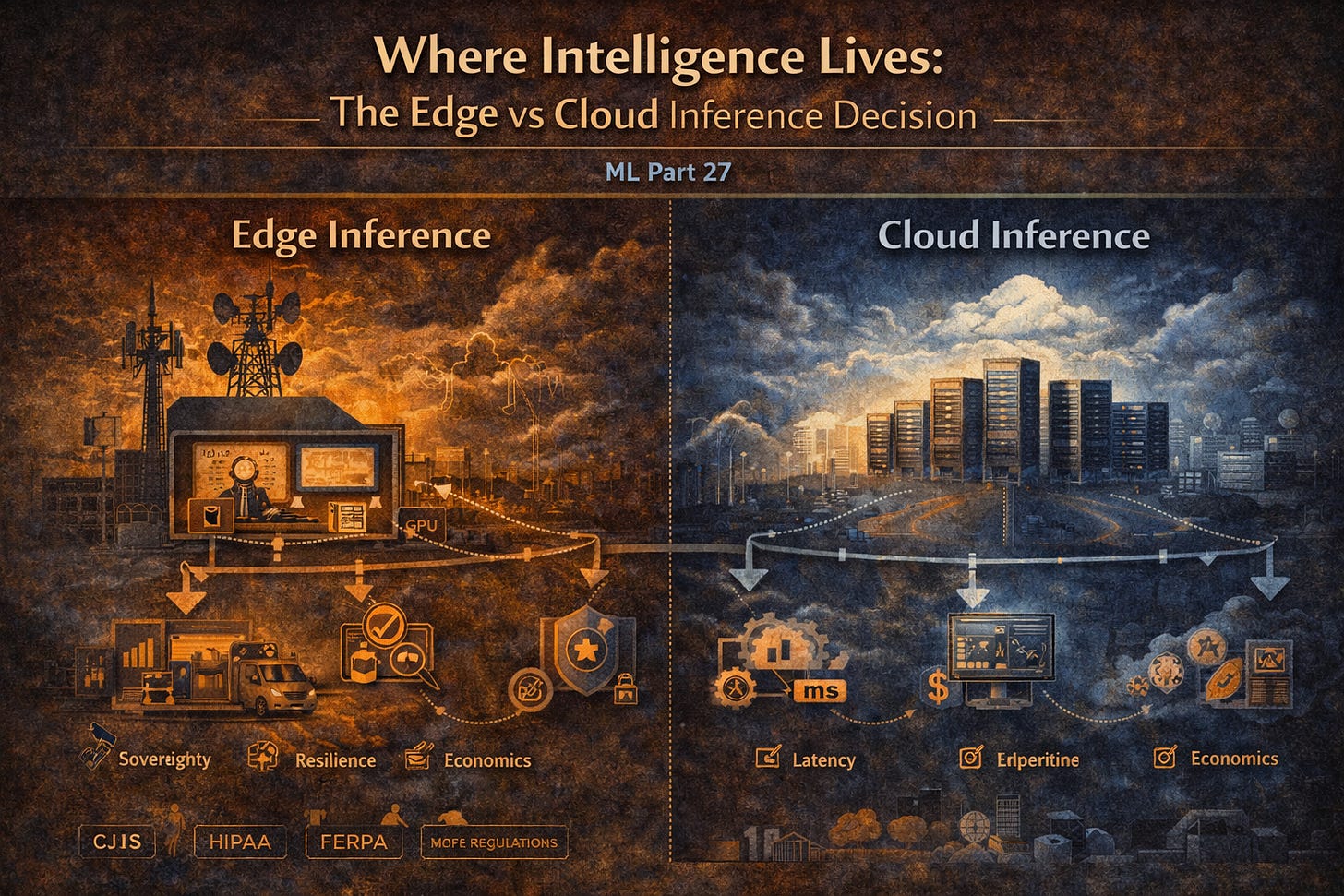

TL;DR: Edge machine learning runs inference on compute located close to where data originates. Cloud machine learning centralizes inference in data centers. The conventional argument for edge computing centers on latency, but genuine sub-10-millisecond requirements are rare outside autonomous vehicles and real-time industrial control. The real drivers are data sovereignty, operational resilience, and economics. The workloads that most need edge infrastructure are precisely the ones hyperscalers have structurally abandoned: regulated, mission-critical, and too small for enterprise account thresholds.

The standard case for edge deployment leads with latency. Autonomous vehicles need millisecond response. Industrial control systems cannot tolerate network round-trip times. These arguments are valid for a narrow class of workloads where physics genuinely dictates compute placement.

But genuine sub-10-millisecond requirements at scale are rarer than the vendor narrative suggests. Most enterprise workloads operate on decision cycles measured in seconds or minutes. A document processing pipeline, a fraud scoring system, and a permit application reviewer do not fail because inference takes 200 milliseconds instead of 10. They fail because the network is down, because the data cannot legally leave the building, or because the cloud provider’s pricing model does not work at the customer’s scale.

Leading with latency is a technical answer to what is fundamentally a problem of sovereignty, resilience, and economics.

Sovereignty: When Data Cannot Leave the Building

The 911 triage system runs locally for three reasons. Latency is the least important of them.

The Criminal Justice Information Services (CJIS) security policy prohibits processing 911 call audio in multi-tenant public cloud environments without a compliance posture that a rural county 911 center with two IT staff cannot operationally maintain. The data cannot go to the cloud, not because the cloud is technically incapable, but because the compliance requirement is operationally infeasible at that scale.

Healthcare operates under equivalent constraints at higher stakes. A regional hospital running machine learning models for clinical decision support or patient triage processes data under HIPAA requirements, which create real liability for cloud-based transmission of protected health information. Edge inference that keeps patient data within the facility’s own infrastructure eliminates that compliance surface rather than managing it.

Education adds a third layer. FERPA governs student records with data residency implications that make cloud processing politically and legally sensitive regardless of technical compliance status. A school district deploying machine learning for student support services is navigating board policy, parent expectations, and state data governance simultaneously. Local inference removes the exposure.

The pattern across 911, healthcare, and education is identical. The data cannot leave, not because of physics, but because of regulatory and operational realities that cloud deployment cannot resolve without creating a compliance burden the customer cannot carry.

Resilience: The System Must Work When Infrastructure Fails

Emergency systems must operate during emergencies. Emergencies frequently involve infrastructure failures. The 911 scenario is not an edge case. It is the defining use case.

A hospital emergency department running cloud-dependent triage assistance loses that capability during the network outage caused by the same storm filling the waiting room. A public works system routing crews to storm damage loses route optimization when the storm takes down the WAN. The workloads most dependent on continuous operation are precisely the workloads most exposed to the network failures that accompany the conditions driving that operational demand.

Edge inference continues operating during WAN outages, cloud provider incidents, and network congestion accompanying major events. The semantic layer from Parts 14 through 24 directly participates in this resilience. Locally cached ontology definitions and knowledge graph assertions remain queryable during outages. Provenance tracking from Part 23 continues recording what the system knew and decided during the outage period, maintaining the audit trail that regulated environments require even when centralized systems are unreachable.
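One way to picture provenance that keeps recording through an outage is a store-and-forward log: every decision is written to a local append-only table first, and records are forwarded to the central system only once it is reachable again. The sketch below is a minimal illustration under stated assumptions, not the mechanism from Part 23. The `ProvenanceOutbox` class, the table layout, the event fields, and the `upload` callback are all hypothetical names chosen for this example.

```python
import json
import sqlite3
import time
from typing import Callable


class ProvenanceOutbox:
    """Hypothetical append-only local log; rows are flagged once uploaded."""

    def __init__(self, path: str = ":memory:") -> None:
        # A real deployment would use a file on durable local storage.
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS provenance ("
            "id INTEGER PRIMARY KEY AUTOINCREMENT, "
            "recorded_at REAL NOT NULL, "
            "payload TEXT NOT NULL, "
            "synced INTEGER NOT NULL DEFAULT 0)"
        )
        self.db.commit()

    def record(self, event: dict) -> int:
        """Write locally first, so recording never depends on the WAN."""
        cur = self.db.execute(
            "INSERT INTO provenance (recorded_at, payload) VALUES (?, ?)",
            (time.time(), json.dumps(event, sort_keys=True)),
        )
        self.db.commit()
        return cur.lastrowid

    def pending(self) -> int:
        row = self.db.execute(
            "SELECT COUNT(*) FROM provenance WHERE synced = 0"
        ).fetchone()
        return row[0]

    def flush(self, upload: Callable[[dict], bool]) -> int:
        """Forward unsynced rows in order; stop at the first failure."""
        rows = self.db.execute(
            "SELECT id, recorded_at, payload FROM provenance "
            "WHERE synced = 0 ORDER BY id"
        ).fetchall()
        sent = 0
        for row_id, recorded_at, payload in rows:
            record = {"recorded_at": recorded_at, **json.loads(payload)}
            try:
                ok = upload(record)
            except OSError:  # ConnectionError is a subclass of OSError
                ok = False
            if not ok:
                break
            self.db.execute(
                "UPDATE provenance SET synced = 1 WHERE id = ?", (row_id,)
            )
            self.db.commit()
            sent += 1
        return sent


# Simulated outage followed by restored connectivity (illustrative data).
link = {"up": False}
received = []


def upload(record: dict) -> bool:
    if not link["up"]:
        raise ConnectionError("WAN unreachable")
    received.append(record)
    return True


outbox = ProvenanceOutbox()
outbox.record({"call_id": "demo-1", "decision": "medical"})
outbox.record({"call_id": "demo-2", "decision": "fire"})

sent_during_outage = outbox.flush(upload)  # nothing leaves during the outage
link["up"] = True
sent_after_restore = outbox.flush(upload)  # backlog drains in original order
```

Stopping at the first failed upload keeps the forwarded records in the order they were written, which is usually what an audit trail needs. A production version would also need retries, deduplication on the central side, and tamper evidence, none of which are shown here.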

Economics: The Workloads Hyperscalers Will Not Serve

The third driver is the one the edge computing industry discusses least directly. Hyperscalers allocate their most capable compute to their largest accounts. A county 911 center, a rural hospital, and a mid-size school district are not enterprise accounts by any hyperscaler definition. They will not receive current-generation GPU allocation. They will not receive dedicated support engineering or the pricing flexibility that makes cloud economics work at scale.

What they will receive is shared infrastructure, spot instance availability, and egress pricing that makes continuous inference workloads economically unsustainable. A 911 center handling 200,000 calls per year peaks at five to six concurrent sessions. An NVIDIA L4 in local infrastructure handles 15 to 20 concurrent calls running Whisper for transcription and a quantized Llama 3.1 8B for intent classification. The workload is exactly sized for right-priced regional edge compute and exactly wrong for hyperscaler pricing models built around enterprise reserved instances and sustained high-volume GPU utilization.
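The concurrency figure above can be sanity-checked with Little's law, which says average concurrency equals arrival rate times average duration. The sketch below is a back-of-the-envelope estimate, not a queueing model. Only the 200,000 calls per year and the lower bound of 15 concurrent calls per L4 come from the text. The 150-second average call duration and the busy-period multiplier of 6 are illustrative assumptions.

```python
SECONDS_PER_YEAR = 365 * 24 * 3600


def mean_concurrency(calls_per_year: float, avg_call_seconds: float) -> float:
    """Little's law: L = arrival rate (calls/s) * average duration (s)."""
    arrival_rate = calls_per_year / SECONDS_PER_YEAR
    return arrival_rate * avg_call_seconds


CALLS_PER_YEAR = 200_000      # from the article
AVG_CALL_SECONDS = 150.0      # assumption: average call handling time
PEAK_FACTOR = 6.0             # assumption: busy-period load vs. yearly average
L4_CAPACITY_LOW = 15          # from the article: lower bound per L4

mean_sessions = mean_concurrency(CALLS_PER_YEAR, AVG_CALL_SECONDS)
peak_sessions = mean_sessions * PEAK_FACTOR
headroom = L4_CAPACITY_LOW / peak_sessions

print(f"mean concurrent sessions: {mean_sessions:.2f}")
print(f"estimated busy-period sessions: {peak_sessions:.2f}")
print(f"headroom on one L4 (low estimate): {headroom:.1f}x")
```

Under these assumed values, mean concurrency comes out just under one session and the busy-period estimate falls between five and six, consistent with the range in the text. Different call durations or peak multipliers would shift the result, so the numbers are worth replacing with a center's own call records.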

This is where existing telecommunications infrastructure becomes directly relevant. Cable headends, central offices, and regional network points of presence represent compute-ready physical locations with power, cooling, and connectivity already in place. The multiple-system operators (MSOs) that run many of them already have customer relationships with the exact municipalities, hospital systems, and school districts that need edge machine-learning infrastructure. Repurposing that infrastructure for edge compute colocated with the customers it serves closes the gap between what hyperscalers will build and what regulated mission-critical workloads actually need.

Where the Semantic Layer Partitions

The knowledge graph infrastructure from Parts 14 through 24 partitions between edge and cloud using the federated query patterns from Part 20. Latency-critical knowledge (the call classification ontology, local policy rules, and operational status assertions) synchronizes to edge compute and remains queryable during network outages. Authoritative knowledge, master entity records, and regulatory policy definitions stay centralized and are queried when connectivity permits. Provenance generated at the edge during outages synchronizes to the central graph when connectivity is restored, maintaining a complete audit trail regardless of when the edge operated independently.
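The partitioning described above can be sketched as a small router that answers edge-resident knowledge from a local store and attempts central lookups only for knowledge that stays centralized. This is a simplified illustration, not the federated query pattern from Part 20. The `PLACEMENT` table, the `PartitionedGraph` class, the knowledge category names, and the `central_lookup` callback are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, Optional


class Placement(Enum):
    EDGE = "edge"
    CENTRAL = "central"


# Hypothetical placement table mirroring the categories named in the text.
PLACEMENT = {
    "call_classification_ontology": Placement.EDGE,
    "local_policy_rules": Placement.EDGE,
    "operational_status": Placement.EDGE,
    "master_entity_records": Placement.CENTRAL,
    "regulatory_policy_definitions": Placement.CENTRAL,
}


@dataclass
class QueryResult:
    value: Optional[str]
    source: str  # "edge", "central", or "unavailable"


@dataclass
class PartitionedGraph:
    central_lookup: Callable[[str, str], str]
    edge_store: dict = field(default_factory=dict)

    def sync_to_edge(self, kind: str, key: str, value: str) -> None:
        """Copy an edge-resident assertion into the local store."""
        if PLACEMENT[kind] is not Placement.EDGE:
            raise ValueError(f"{kind} is not edge-resident")
        self.edge_store[(kind, key)] = value

    def query(self, kind: str, key: str) -> QueryResult:
        # Edge-resident knowledge never touches the network.
        if PLACEMENT[kind] is Placement.EDGE:
            return QueryResult(self.edge_store.get((kind, key)), "edge")
        # Centralized knowledge is queried only when connectivity permits.
        try:
            return QueryResult(self.central_lookup(kind, key), "central")
        except OSError:  # ConnectionError is a subclass of OSError
            return QueryResult(None, "unavailable")


# Simulated central graph behind a link that can go down (illustrative).
link = {"up": True}


def central_lookup(kind: str, key: str) -> str:
    if not link["up"]:
        raise ConnectionError("central graph unreachable")
    return f"central:{kind}:{key}"


graph = PartitionedGraph(central_lookup)
graph.sync_to_edge(
    "call_classification_ontology", "cardiac_arrest", "priority-1-medical"
)

link["up"] = False  # WAN outage
edge_answer = graph.query("call_classification_ontology", "cardiac_arrest")
central_answer = graph.query("master_entity_records", "unit-42")
```

During the simulated outage the ontology lookup still resolves from the edge store, while the master-record lookup reports itself as unavailable instead of blocking. Making that distinction explicit in the result lets the calling pipeline decide how to proceed without centralized data.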

The machine learning stack powering these workloads is not lightweight. A 911 triage system runs transcription, intent classification, entity resolution against local knowledge graph assertions, and provenance logging in parallel on every call. As we covered in Part 25, Intelligent Document Processing is the engine underneath all three use cases. Real-time audio triage for 911, clinical decision support drawing on electronic health records, and student outcome modeling built on educational data are all IDP pipelines that run against domain-specific ontologies. When the network fails, the GPU and CPU in the local headend keep every layer of that pipeline operational. The inference does not degrade. The knowledge graph remains queryable. The audit trail continues uninterrupted. Edge compute is not a fallback. It is the architecture that makes these workloads possible in the first place.

In a CJIS-regulated environment, provenance continuity is not an architectural nicety. It is a compliance requirement.

Why This Matters

The edge-versus-cloud decision is made early, and its consequences are difficult to reverse. The right question is not edge or cloud. It is what this system needs to guarantee when conditions are not ideal, and where inference needs to live to provide that guarantee.

For the 911 center in January, the hospital during a network outage, and the school district navigating data governance requirements, the answer is local. Not because local is faster in milliseconds, but because local is the only architecture that keeps working when the alternative stops, satisfies the compliance requirements the customer cannot waive, and fits within the budget a hyperscaler will not touch.

The workloads that most need edge machine learning are not the autonomous vehicle fleets and high-frequency trading systems that dominate the edge computing narrative. They are the regulated, mission-critical, community-serving systems that compliance requirements have pushed out of multi-tenant clouds and that hyperscaler pricing has pushed out of enterprise tiers. Right-sized compute at the right location for the right workload is the only architecture that simultaneously satisfies all three constraints.

In Part 28, we look at machine learning in multi-agent systems, how prediction engines power autonomous coordination, and why federated knowledge is the missing infrastructure layer that most multi-agent deployments discover too late.

#MachineLearning #EdgeML #CloudML #MLInfrastructure #DataSovereignty #EnterpriseAI #EdgeComputing