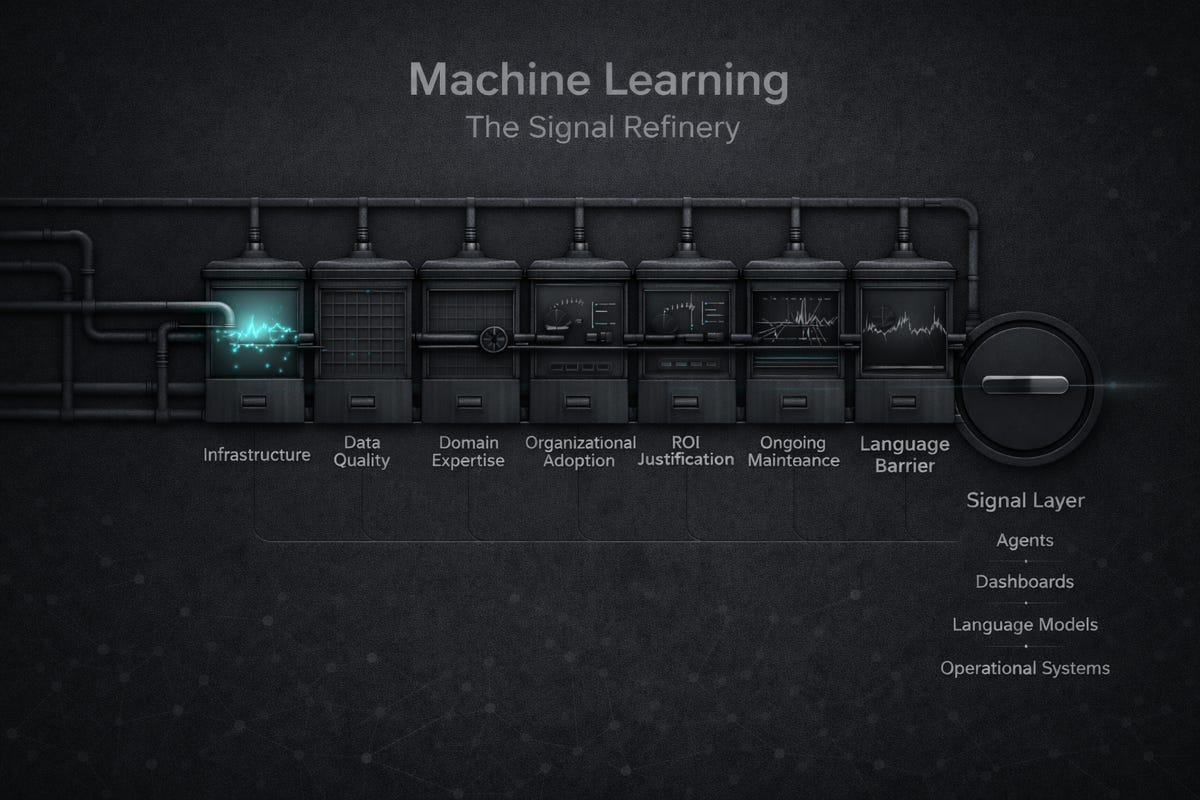

TL;DR: Production ML systems require five infrastructure layers that most technical leaders never see: data pipelines, feature stores, model registries, monitoring systems, and deployment platforms. Understanding this stack explains why ML projects that work in development fail in production, and why hiring a data scientist doesn’t automatically deliver business value.

ML runs on the same infrastructure foundation as any production system: compute, storage, networking, and monitoring. The difference lies in what sits on top of that foundation: the layers that turn raw compute into a system that learns patterns from continuously arriving data and serves predictions for specific use cases.

Traditional applications deploy code that runs predictably. ML systems deploy models that degrade over time as data patterns shift. That difference drives five additional infrastructure layers that manage model-specific complexity.

The Five Layers Production ML Actually Needs

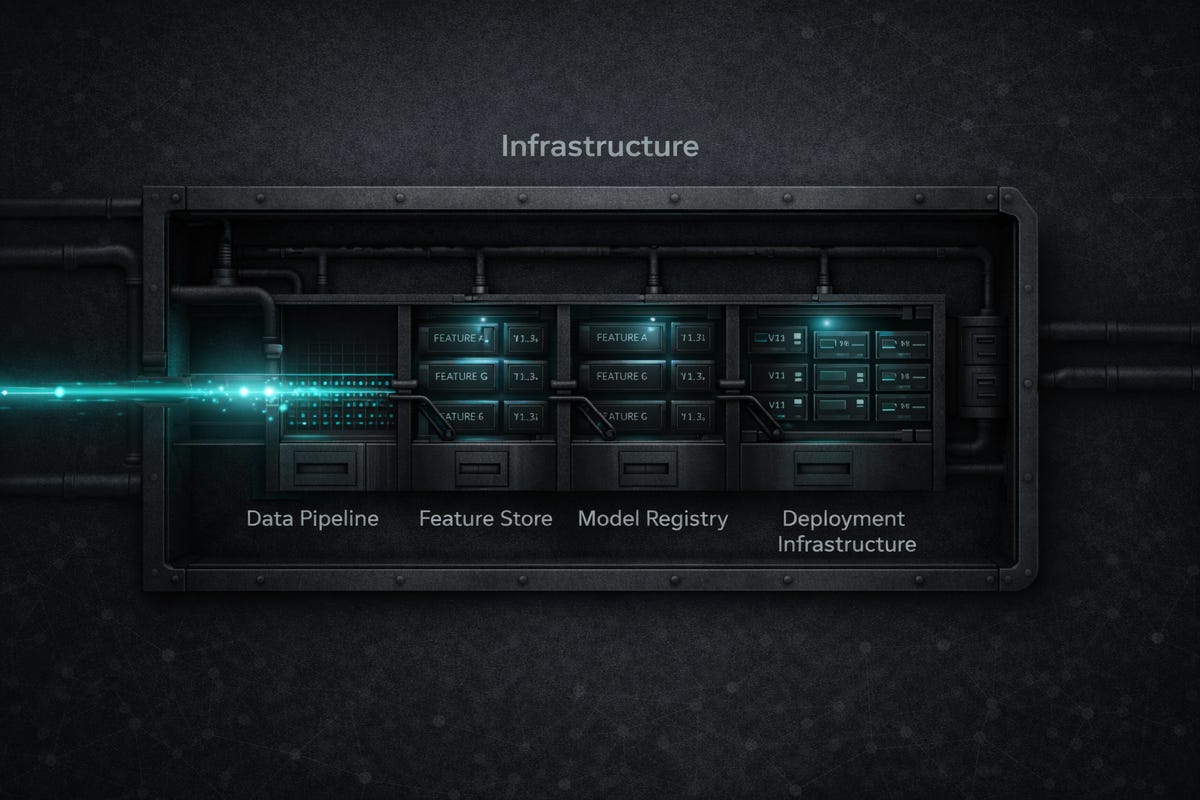

Data Pipelines

ML models don’t run on raw data. They run on engineered features extracted through validated pipelines.

Fraud detection does not learn from transaction logs. It learns from features such as transaction amount relative to a user’s 30-day average, time since the last transaction from a device, and merchant category compared to purchase history.
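To make two of those features concrete, here is a minimal sketch in plain Python. The `Transaction` fields and function names are hypothetical, chosen for illustration only; a real pipeline would compute these over a database or stream instead of an in-memory list.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Transaction:
    # Hypothetical schema for illustration.
    user_id: str
    device_id: str
    amount: float
    timestamp: datetime


def amount_vs_30d_average(history, current):
    """Ratio of the current amount to the user's mean amount over the prior 30 days."""
    window_start = current.timestamp - timedelta(days=30)
    recent = [
        t.amount
        for t in history
        if t.user_id == current.user_id
        and window_start <= t.timestamp < current.timestamp
    ]
    if not recent:
        return None  # no baseline yet; the caller decides how to handle this
    mean = sum(recent) / len(recent)
    if mean == 0:
        return None  # avoid dividing by a zero baseline
    return current.amount / mean


def seconds_since_last_device_txn(history, current):
    """Seconds since the same device was last seen, or None if the device is new."""
    previous = [
        t.timestamp
        for t in history
        if t.device_id == current.device_id and t.timestamp < current.timestamp
    ]
    if not previous:
        return None
    return (current.timestamp - max(previous)).total_seconds()
```

A ratio of 3.0 means the transaction is three times the user’s recent average, which is the kind of signal a model can learn from and a raw log line does not provide.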

Tools like Apache Airflow or Prefect orchestrate these transformations. They extract data from source systems, validate quality, engineer features based on domain logic, and load processed data where models can access it. When pipelines break, models stop learning from fresh data, and accuracy degrades.
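The extract, validate, engineer, and load steps can be sketched as plain Python functions. This deliberately avoids any orchestrator API: in Airflow or Prefect each function would typically become a scheduled task. The sample rows, the `max_reject_rate` threshold, and the dict used as a store are all assumptions made for illustration.

```python
def extract():
    """Stand-in for reading from a source system (hypothetical rows)."""
    return [
        {"user_id": "u1", "amount": 25.0},
        {"user_id": "u2", "amount": -4.0},  # invalid: negative amount
        {"user_id": None, "amount": 10.0},  # invalid: missing user
        {"user_id": "u1", "amount": 75.0},
    ]


def validate(rows):
    """Split rows into valid and rejected ones instead of failing silently."""
    valid, rejected = [], []
    for row in rows:
        ok = row["user_id"] is not None and row["amount"] >= 0
        (valid if ok else rejected).append(row)
    return valid, rejected


def engineer_features(rows):
    """Aggregate per-user transaction count and mean amount."""
    totals = {}
    for row in rows:
        count, total = totals.get(row["user_id"], (0, 0.0))
        totals[row["user_id"]] = (count + 1, total + row["amount"])
    return {
        user: {"txn_count": count, "mean_amount": total / count}
        for user, (count, total) in totals.items()
    }


def load(features, store):
    """Write features to wherever models read them (a dict here)."""
    store.update(features)


def run_pipeline(store, max_reject_rate=0.5):
    rows = extract()
    valid, rejected = validate(rows)
    # Refuse to load if data quality is too poor, so bad data fails loudly.
    if rows and len(rejected) / len(rows) > max_reject_rate:
        raise ValueError("too many invalid rows; refusing to load")
    load(engineer_features(valid), store)
    return len(valid), len(rejected)
```

The design choice worth noting is the explicit quality gate: a pipeline that stops with an error is easier to notice than one that quietly feeds a model bad features.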

Feature Stores

Feature stores, such as Feast, Tecton, and Hopsworks, ensure features used during training match features used during prediction. This prevents the common failure mode in which a model achieves 95% accuracy in development but then drops to 70% in production because features were implemented differently.

Feature stores also serve features at prediction time with low latency, handle time-travel queries for training on historical data, and track feature versions.
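The time-travel idea can be illustrated with a tiny in-memory sketch. This is not the API of Feast, Tecton, or Hopsworks; the class and method names are hypothetical. It shows only the core behavior: training reads the value as it was at a past moment, while serving reads the latest value, and both come from the same stored data.

```python
import bisect
from datetime import datetime


class TinyFeatureStore:
    """In-memory sketch: per-entity feature values ordered by timestamp."""

    def __init__(self):
        self._timestamps = {}  # (entity_id, feature) -> sorted list of datetimes
        self._values = {}      # (entity_id, feature) -> values in the same order

    def write(self, entity_id, feature, timestamp, value):
        key = (entity_id, feature)
        times = self._timestamps.setdefault(key, [])
        values = self._values.setdefault(key, [])
        idx = bisect.bisect_right(times, timestamp)  # keep lists sorted by time
        times.insert(idx, timestamp)
        values.insert(idx, value)

    def get_as_of(self, entity_id, feature, as_of):
        """Training-time read: latest value written at or before `as_of`."""
        key = (entity_id, feature)
        times = self._timestamps.get(key, [])
        idx = bisect.bisect_right(times, as_of)
        return self._values[key][idx - 1] if idx else None

    def get_latest(self, entity_id, feature):
        """Serving-time read: the most recent value."""
        values = self._values.get((entity_id, feature))
        return values[-1] if values else None


store = TinyFeatureStore()
store.write("u1", "avg_amount_30d", datetime(2024, 1, 1), 40.0)
store.write("u1", "avg_amount_30d", datetime(2024, 2, 1), 55.0)
# A training example dated mid-January must see the January value, not February's.
january_value = store.get_as_of("u1", "avg_amount_30d", datetime(2024, 1, 15))
```

Reading the value as of the training example’s timestamp keeps information from the future out of the training set, which is one way development accuracy ends up inflated.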

Model Registries

Production environments run multiple model versions simultaneously.

Tools like MLflow, Weights & Biases, or Neptune.ai track which models are deployed where, store training metadata and performance metrics, and enable A/B testing between versions.

Without registries, you get models named “fraud_final_v3_actually_final.pkl” scattered across directories with no deployment record. When new models underperform, you need the ability to roll back to previous versions instantly.
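As a rough illustration of what a registry records, here is a minimal in-memory sketch. It does not use the MLflow, Weights & Biases, or Neptune.ai APIs; the class, method names, and artifact paths are hypothetical. The point is that versions, metrics, and deployment history live in one place, so a rollback is a lookup and not a search through directories.

```python
class ModelRegistry:
    """Toy registry: numbered versions per model name plus deployment history."""

    def __init__(self):
        self._versions = {}  # name -> list of metadata dicts (index = version - 1)
        self._deployed = {}  # name -> history of deployed version numbers

    def register(self, name, artifact_path, metrics):
        versions = self._versions.setdefault(name, [])
        versions.append({"artifact_path": artifact_path, "metrics": dict(metrics)})
        return len(versions)  # version numbers start at 1

    def deploy(self, name, version):
        if not 1 <= version <= len(self._versions.get(name, [])):
            raise KeyError(f"unknown version {version} for model {name!r}")
        self._deployed.setdefault(name, []).append(version)

    def current(self, name):
        history = self._deployed.get(name)
        return history[-1] if history else None

    def rollback(self, name):
        """Drop the latest deployment and return the version now in effect."""
        history = self._deployed.get(name, [])
        if len(history) < 2:
            raise RuntimeError("no earlier deployment to roll back to")
        history.pop()
        return history[-1]


registry = ModelRegistry()
v1 = registry.register("fraud", "models/fraud/1.pkl", {"auc": 0.91})
v2 = registry.register("fraud", "models/fraud/2.pkl", {"auc": 0.93})
registry.deploy("fraud", v1)
registry.deploy("fraud", v2)
restored = registry.rollback("fraud")  # back to version 1
```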

Monitoring Systems

Models trained on historical data implicitly assume the future resembles the past. When patterns change, accuracy degrades. Tools like Arize, Evidently, or Fiddler detect when input data distributions shift, prediction outputs drift, or accuracy declines before business metrics suffer.
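One common way to quantify an input distribution shift is the Population Stability Index (PSI), sketched below in plain Python. This is an illustrative choice, not a description of how Arize, Evidently, or Fiddler compute drift. The equal-width binning, the bin count, and the `eps` floor for empty bins are assumptions.

```python
import math


def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a reference sample and a recent sample of one numeric feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the reference is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # Floor empty bins at eps so the logarithm below stays defined.
        return [max(c / len(values), eps) for c in counts]

    p, q = proportions(expected), proportions(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


reference = [i / 100 for i in range(100)]      # training-time feature values
recent = [0.5 + i / 200 for i in range(100)]   # production values, shifted upward
psi = population_stability_index(reference, recent)
```

A PSI of zero means the two binned distributions match. A commonly cited rule of thumb treats values above roughly 0.25 as a significant shift, though the right alerting threshold depends on the feature and should be tuned.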

A demand forecasting model trained on pre-pandemic shopping behavior failed during pandemic disruptions because customer patterns shifted dramatically. Without monitoring, you discover model failures after business outcomes deteriorate.

Deployment Infrastructure

Kubernetes-native tools like Seldon Core or KServe handle model serving (exposing models as APIs), scaling based on request volume, routing traffic to specific versions, and automatic recovery from failures.
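Routing traffic to specific versions often means sending a small, stable share of requests to a new model. The sketch below shows one way to do that with a hash of the request ID. It is not how Seldon Core or KServe implement traffic splitting; the function name, version labels, and weights are hypothetical.

```python
import hashlib


def route_version(request_id, weights):
    """Pick a model version for a request, stable for a given request_id.

    `weights` maps version name -> share of traffic; shares should sum to 1.
    """
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    point = int.from_bytes(digest[:8], "big") / 2**64  # roughly uniform in [0, 1)
    cumulative = 0.0
    for version, share in sorted(weights.items()):
        cumulative += share
        if point < cumulative:
            return version
    # Rounding guard: fall back to the last version in sorted order.
    return max(weights)


# Send about 10% of requests to the candidate model.
weights = {"v1": 0.9, "v2": 0.1}
chosen = route_version("req-42", weights)
```

Hashing instead of random sampling means the same request ID always reaches the same version, which makes comparisons between versions easier to reproduce.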

Deployment includes managing model dependencies, GPU resources if needed, and ensuring prediction latency meets business requirements.

Why This Infrastructure Matters

ML systems differ fundamentally from traditional software.

· Traditional software: write code, test it, deploy it, and it works consistently over time.

· ML systems: train a model, deploy it, watch performance degrade as data patterns change, then rely on infrastructure to detect degradation and retrain automatically.
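The detect-and-retrain loop in the second bullet can be sketched as a rolling accuracy check. This assumes ground-truth labels eventually arrive for predictions, which is not true of every use case; the class name, window size, and accuracy threshold are hypothetical.

```python
from collections import deque


class RetrainTrigger:
    """Signals a retrain when accuracy over the last `window` outcomes drops too low."""

    def __init__(self, window=100, min_accuracy=0.85):
        self.outcomes = deque(maxlen=window)  # True where prediction matched label
        self.min_accuracy = min_accuracy

    def record(self, prediction, label):
        self.outcomes.append(prediction == label)

    def should_retrain(self):
        if len(self.outcomes) < self.outcomes.maxlen:
            return False  # wait for a full window before judging
        return sum(self.outcomes) / len(self.outcomes) < self.min_accuracy
```

In practice the trigger would start a retraining job and register the resulting model; here it only returns a flag.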

A model that works in development won’t survive production without infrastructure to sustain it and adapt it as data changes.

What This Means for Planning

When evaluating ML projects, treat model training as the smallest piece. Budget for data engineering, MLOps infrastructure, and ongoing maintenance. A data science team can often build an accurate model in days. Engineering teams often need months to build the infrastructure that keeps that model accurate in production.

This is why “we hired a data scientist, why isn’t ML working?” is the wrong question. Data scientists build models. Models need infrastructure to run reliably in production.

Closing

The production ML stack - data pipelines, feature stores, model registries, monitoring, deployment - separates ML demos from ML systems delivering value over time. These layers exist because ML has operational requirements that traditional software infrastructure does not address.

#MachineLearning

#MLOps

#AIInfrastructure

#EnterpriseAI

#AIStrategy

#DataEngineering